MarPOT

A Large-Scale Maritime Panoptic Obstacle Tracking Benchmark

A maritime perception benchmark that unifies detection, panoptic segmentation, and multi-object tracking under temporally consistent instance identifiers.

MarPOT is built from six months of real maritime operations across harbor anchorages, coastal channels, and offshore routes.

Harbor anchorages, coastal channels, and offshore routes under varying weather, lighting, and traffic conditions.

Bounding boxes, panoptic instance masks, and temporally consistent IDs, all aligned within the same frame.

Occlusion, specular reflection, severe backlighting, haze, and extreme scale variations baked into the data.

Detection benchmarks provide only boxes. Segmentation benchmarks provide pixel labels but no temporal IDs. Tracking benchmarks lack dense instance masks.

No public maritime dataset previously offered temporally consistent panoptic identifiers.

Object areas span four orders of magnitude. Distant vessels and nearby obstacles coexist in the same frame.

Specular reflection, severe backlighting, fog, haze, and dense shoreline clutter.

Occlusion and truncation attributes, polygonal instance masks, and temporal IDs share a single coordinate system per frame.

Every dynamic instance keeps the same identifier across an entire sequence.

Multiple regions and traffic patterns: dense harbors, transit channels, and sparse open water.

22 semantic segmentation architectures, 2 panoptic frameworks across 6 backbones, and 7 multi-object trackers.
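To make the "four orders of magnitude" scale claim concrete, here is a minimal sketch of how such a span could be measured from COCO-style annotations, which carry a standard `area` field. The function name `area_span_orders` and the sample values are illustrative assumptions, not part of the MarPOT toolkit or data.

```python
import math


def area_span_orders(annotations):
    """Return log10(max_area / min_area) over annotations with positive area."""
    areas = [a["area"] for a in annotations if a.get("area", 0) > 0]
    if not areas:
        raise ValueError("no annotations with positive area")
    return math.log10(max(areas) / min(areas))


# Made-up values for illustration only; not taken from MarPOT.
sample_annotations = [
    {"id": 1, "area": 25.0},       # distant vessel, a few pixels across
    {"id": 2, "area": 4_000.0},    # mid-range vessel
    {"id": 3, "area": 250_000.0},  # nearby obstacle filling much of the frame
]

span = area_span_orders(sample_annotations)
print(f"object areas span about {span:.1f} orders of magnitude")
```

A span near 4 means the largest object covers roughly 10,000 times the pixel area of the smallest, which is the regime where a single fixed receptive field tends to struggle.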

A curated subset of MarPOT frames covering port operations, coastal channels, offshore traffic, and adverse conditions.

13 anchored ships under low-angle sun with shoreline clutter and partial occlusions.

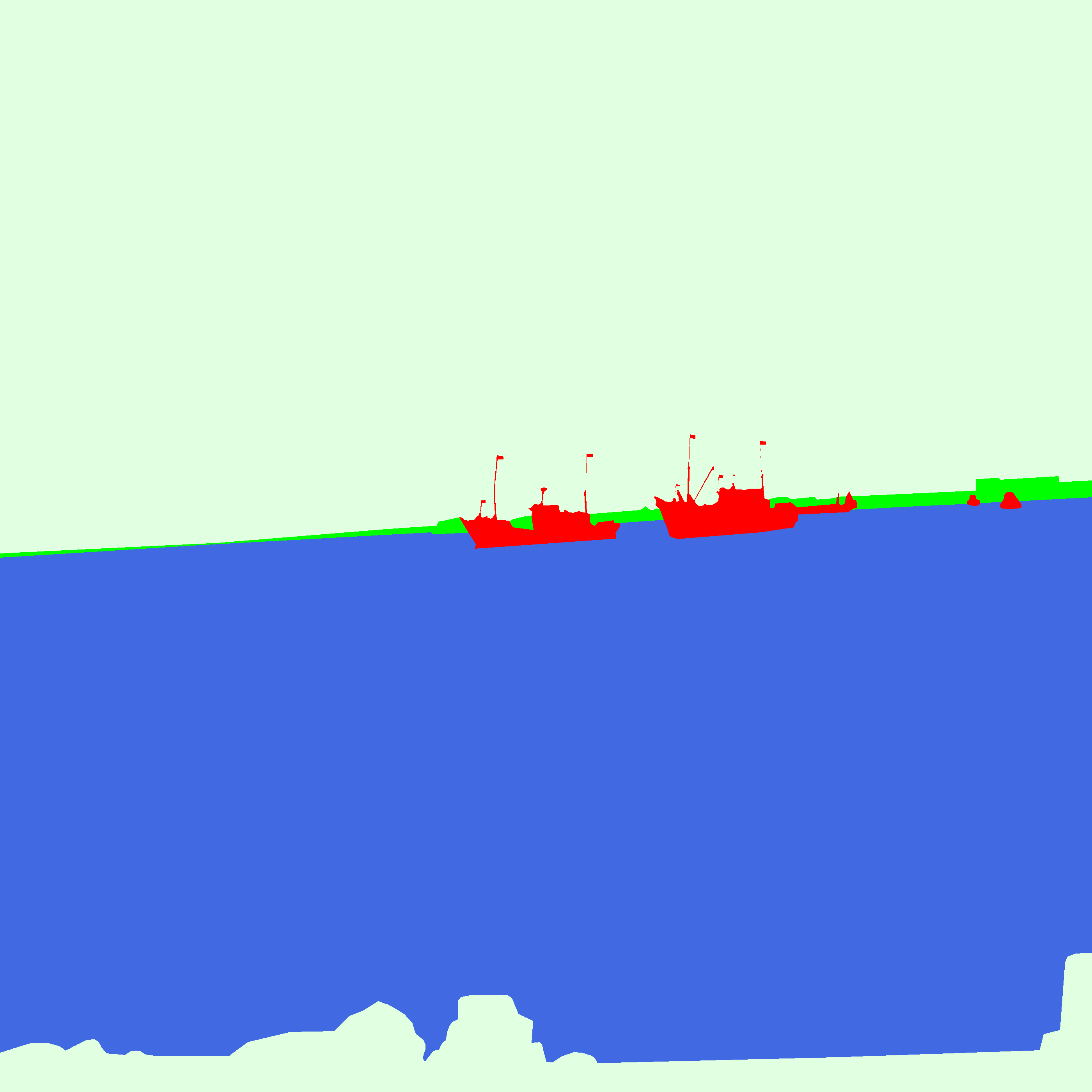

Backlit fishing vessels moored near the shoreline under strong surface glare.

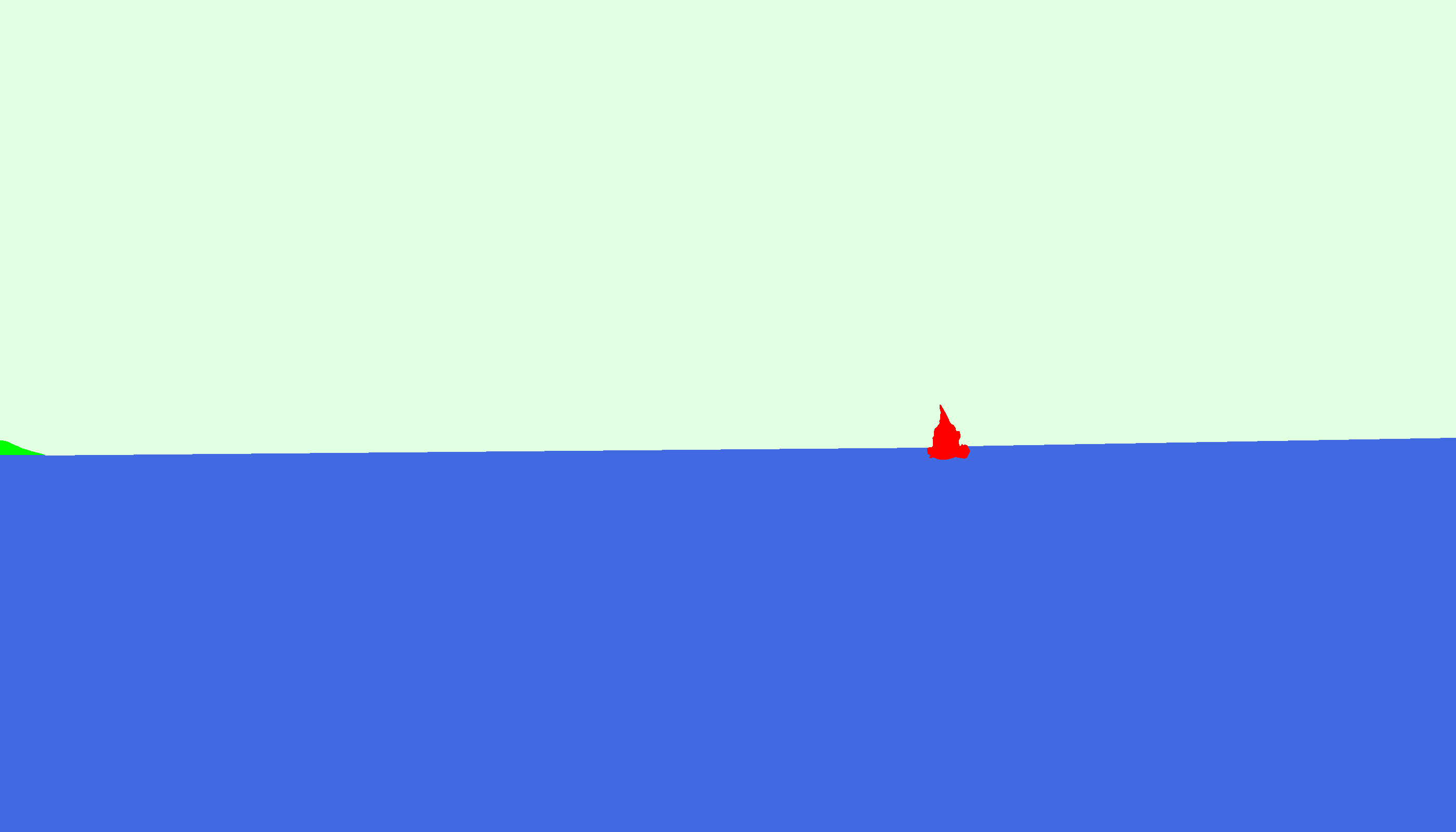

A small vessel returning with a faint wake under uniform gray sky and sea.

The full release ships in a single archive with images, panoptic masks, COCO-format JSONs, and the evaluation toolkit.
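As a rough sketch of how the COCO-format JSONs might be consumed, the snippet below groups annotations into per-instance tracks. The top-level `annotations` list and the `image_id` and `category_id` fields follow the COCO convention. The `track_id` key is an assumption about how the temporal identifier is stored, as is the idea that `image_id` increases with time inside a sequence. Check the released files and the evaluation toolkit for the actual schema.

```python
from collections import defaultdict


def build_tracks(coco, id_key="track_id"):
    """Group COCO-style annotations into tracks keyed by instance identifier.

    `id_key` is a hypothetical field name; annotations without it are skipped.
    """
    tracks = defaultdict(list)
    for ann in coco["annotations"]:
        if id_key in ann:
            tracks[ann[id_key]].append(ann)
    # Assumption: image_id order matches temporal order within a sequence.
    for anns in tracks.values():
        anns.sort(key=lambda a: a["image_id"])
    return dict(tracks)


def inconsistent_tracks(tracks):
    """Return the IDs of tracks whose annotations disagree on category_id."""
    return [
        tid for tid, anns in tracks.items()
        if len({a["category_id"] for a in anns}) > 1
    ]


# Tiny in-memory stand-in for a loaded annotation file (made-up values).
# With a real file: coco = json.load(open(path)) for whatever path the
# archive actually uses.
sample_coco = {
    "images": [{"id": 1}, {"id": 2}],
    "annotations": [
        {"id": 10, "image_id": 2, "category_id": 1, "track_id": 7, "bbox": [12, 8, 30, 14]},
        {"id": 11, "image_id": 1, "category_id": 1, "track_id": 7, "bbox": [10, 8, 30, 14]},
        {"id": 12, "image_id": 1, "category_id": 2, "track_id": 9, "bbox": [200, 40, 6, 4]},
    ],
}

tracks = build_tracks(sample_coco)
for tid, anns in sorted(tracks.items()):
    print(tid, [a["image_id"] for a in anns])
print("inconsistent:", inconsistent_tracks(tracks))
```

Grouping by identifier first keeps later steps simple: per-track length, gaps between consecutive frames, and category consistency can all be read off the same dictionary.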

Approx. 25 GB

Approx. 800 MB

Code repository

MarPOT is released for academic research only. Commercial use requires written permission.

If you use MarPOT in your work, please cite:

@inproceedings{marpot2026,
  title     = {MarPOT: A Large-Scale Maritime Panoptic Obstacle Tracking Benchmark},
  author    = {Anonymous Authors},
  booktitle = {Proceedings of the 43rd International Conference on Machine Learning (ICML)},
  year      = {2026}
}